High Availability is attainable for businesses of any size

As more enterprises, along with their clients and end users, classify applications as “mission-critical”, the financial risks associated with system downtime continue to escalate. In May 2013, the Aberdeen Group performed a comprehensive survey and found that the average cost of downtime was more than US$163,000 per hour*. With profit margins and brand reputations on the line, small businesses and large enterprises alike must recognize the importance of ensuring maximum availability for all critical applications.

And how much downtime is too much?

Downtime refers to any period when a system is unavailable. It has many possible causes, including human error, power outages, network outages, system failures and code roll-outs. But regardless of the cause, how much is too much? What percentage of uptime are you trying to achieve? Consider this chart, which expresses common uptime levels in “nines” alongside the average annual downtime each one allows:

| Uptime Percentage | Average Annual Downtime |
|---|---|
| 99% | 87 hours, 40 minutes |
| 99.9% | 8 hours, 46 minutes |
| 99.99% | 52 minutes, 36 seconds |
| 99.999% | 5 minutes, 16 seconds |
| 99.9999% | 31.6 seconds |
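The figures in the chart follow from simple arithmetic: multiply the fraction of time a system may be down by the number of hours in a year. The short Python sketch below reproduces them, assuming an average year of 365.25 days, which is the year length the chart's values imply. The function name `annual_downtime` is our own and is used only for illustration.

```python
from datetime import timedelta

# Average year length in hours, including leap years (365.25 days).
# This is an assumption inferred from the chart's figures.
HOURS_PER_YEAR = 365.25 * 24


def annual_downtime(uptime_percent: float) -> timedelta:
    """Return the average downtime per year allowed at a given uptime percentage."""
    downtime_fraction = 1 - uptime_percent / 100
    return timedelta(hours=HOURS_PER_YEAR * downtime_fraction)


# Print the allowed downtime for each of the common "nines".
for pct in (99, 99.9, 99.99, 99.999, 99.9999):
    print(f"{pct}% -> {annual_downtime(pct)}")
```

You can plug in any target, such as 99.95%, to see what that commitment really means in hours and minutes per year.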

Before deciding how to implement high availability, first determine which systems or applications require it. We recommend creating a comprehensive list of systems and categorizing each one. Then determine what level of availability each category deserves, because the higher the availability, the higher the cost. For example, a public-facing website might have a higher priority than an email server or a CRM. Consider all systems carefully.

Components of High Availability

Every IT implementation has many components, and so does every successful high availability solution. You must have redundant infrastructure, such as servers, storage and network equipment, in an alternate location. You must replicate data ahead of an outage so that the sites stay synchronized. And when disaster strikes, you must be prepared to redirect traffic from the primary site to the alternate site seamlessly for your users. A true high availability solution requires implementation at each of these levels. Total Uptime helps complete the picture with solutions that can redirect and reroute traffic.
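To see why a redundant site matters, it can help to estimate the combined availability of several sites. The sketch below is a simplified model, not a guarantee: it assumes that site failures are independent of one another and that traffic is redirected instantly and flawlessly, neither of which holds perfectly in practice. The function name `combined_availability` is hypothetical.

```python
def combined_availability(site_availabilities):
    """Estimate availability of a service that is up while at least one site is up.

    Assumes independent site failures and instant, perfect failover.
    Each availability is a fraction between 0 and 1 (for example, 0.99).
    """
    probability_all_down = 1.0
    for availability in site_availabilities:
        probability_all_down *= 1 - availability
    return 1 - probability_all_down


# Two sites that each achieve 99% on their own.
print(f"{combined_availability([0.99, 0.99]):.4%}")
```

Under these assumptions, two sites that each deliver 99% uptime combine to roughly 99.99%. Real-world results depend on how quickly and reliably traffic actually moves between sites.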

Key Solutions: From SMB to Fortune 100, we fit every need and budget

At Total Uptime, our expertise is at the network and traffic routing level – the outermost layer where clients or users connect and must be diverted from a primary site to a secondary, tertiary or other location during an outage. We offer two critical solutions that can drastically increase application availability and reduce downtime at this level.

High Availability using DNS

Our Cloud DNS service ensures that DNS is not the weak link. Many organizations overlook DNS because of its simplicity. But if DNS were the single reason for application downtime, would you reconsider? Some of the world’s largest organizations have been affected by DNS downtime caused by their ISPs, such as AT&T, or by domain registrars such as Network Solutions and GoDaddy. If you rely on providers like these, whose core business is not DNS uptime, you’re at risk.

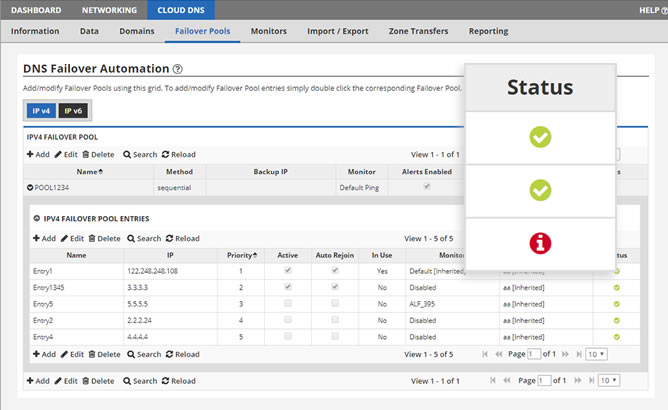

With Total Uptime’s Cloud DNS service, you can be assured that DNS will not be the weak link. You also gain security and management capabilities you may not have had before. Plus, you can use DNS Failover to automate the re-routing of traffic from one site to another.
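Conceptually, DNS failover comes down to checking whether the primary site responds and answering DNS queries with the secondary site's address when it does not. The Python sketch below illustrates that general idea only. It is not Total Uptime's implementation or API: the function names, the TCP check on port 443 and the placeholder IP addresses are all assumptions made for illustration.

```python
import socket


def tcp_health_check(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, unreachable hosts and timeouts.
        return False


def select_dns_answer(primary_ip, secondary_ip, check=tcp_health_check, port=443):
    """Pick the IP address a failover-aware DNS record should answer with.

    Hypothetical helper: answers with the primary while it passes the
    health check, otherwise with the secondary.
    """
    if check(primary_ip, port):
        return primary_ip
    return secondary_ip


# Example with a stubbed check that simulates a primary-site outage.
# Both addresses are documentation placeholders, not real servers.
answer = select_dns_answer("192.0.2.10", "198.51.100.20",
                           check=lambda host, port: False)
print(answer)
```

Keep in mind that resolvers cache DNS answers for the record's TTL, so a change made this way reaches users only as cached answers expire.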


Network-Based High Availability

When traffic needs to be seamlessly redirected from a primary datacenter (or site or server) to a secondary or disaster recovery location, how will you make the switch? You could make a DNS change, or even automate it with our DNS Failover solution. But for ultra-critical applications that need something faster than a DNS change, or that cannot tolerate an IP address change, consider Cloud Load Balancing. It supports active/active as well as active/passive (primary/secondary) configurations, and it can either redirect traffic automatically or wait until you fail over manually.
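The difference between these configurations can be sketched in a few lines of Python. This is an illustrative model only, not how Cloud Load Balancing is implemented: the `Site` type, the `route_targets` function and its parameters are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    healthy: bool


def route_targets(sites, mode="active/passive", auto_failover=True,
                  manual_active=None):
    """Return the sites that should receive traffic, in priority order.

    sites is listed from highest to lowest priority.
    """
    healthy = [site for site in sites if site.healthy]
    if mode == "active/active":
        # Every healthy site shares the traffic.
        return healthy
    if not auto_failover:
        # Manual mode: traffic stays on the operator's chosen site
        # (the first site by default) until someone changes it.
        chosen = manual_active or sites[0].name
        return [site for site in sites if site.name == chosen]
    # Automatic active/passive: the first healthy site takes all traffic.
    return healthy[:1]


sites = [Site("primary", healthy=False), Site("secondary", healthy=True)]
print([site.name for site in route_targets(sites)])
print([site.name for site in route_targets(sites, auto_failover=False)])
```

With automatic failover, traffic moves to the secondary site as soon as the primary is marked unhealthy. With manual failover, traffic stays where it is until an operator selects a different site, which some teams prefer when they want a human to confirm the outage first.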
